If you spend any time in conversations about artificial intelligence and financial services, you’ll notice that they tend to follow a pattern. Someone mentions faster execution. Someone else raises smarter signals. Then comes personalization at scale, and frictionless everything. Everyone nods. It’s not that any of it is wrong. It just skips the really important part.

I’ve worked in financial services long enough to know that the interesting questions about any new technology rarely revolve around what it can do. They are about what happens when it lands in the real world, in the hands of people with very different levels of experience, making decisions under real uncertainty. This is the conversation we should be having about AI now. I don’t think we’re there yet.

What is already in the room

Let’s be clear about one thing: AI is not coming to trading. It’s already here. It’s been here for a while. It is just unevenly distributed and not always well understood.

At the institutional level, this is not news. Algorithmic and AI-driven execution, real-time sentiment analysis, and high-frequency pattern recognition have been standard practice for years. The newer development is the application of large language models to unstructured data: earnings call transcripts, regulatory filings, and news streams.

Capital.com UK CEO Robert Osborne and other business leaders in the House of Lords to discuss artificial intelligence

The ability to process and synthesize this type of material faster than any human team could is genuinely changing how institutional research and risk assessment work. This is a meaningful shift.

For retail, the change is more visible, but perhaps less studied. AI-powered charting tools, personalized market summaries, automated alerts, and in-app education have become fairly standard across most major platforms. But what is less obvious, and perhaps more important, is what happens in the background: the automation of decision making, suitability assessments, and the detection of unusual trading patterns that might indicate a problem. This is where AI does some of its most important work, quietly, without much fanfare.

What’s coming

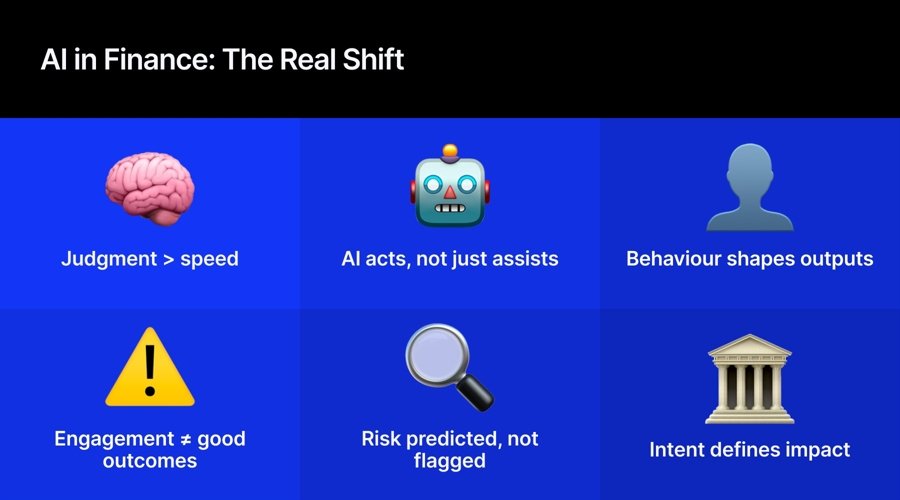

The next wave is less about implementation and more about governance. Agentic AI is what I am watching most closely.

The ability of AI systems to take a series of actions on their own, searching, evaluating and acting without needing human guidance at each step, is already being tested in enterprise environments.

For retail, the implications are significant and have not yet been fully resolved. An AI system that monitors the portfolio and alerts when something changes materially is one thing. The AI deciding what to do about it is another thing entirely. This distinction is important, and the industry needs to think carefully about where to draw the line.

Personalization is the other big thing. The combination of behavioral data, trading history, and AI modeling produces systems that can truly adapt to individual users in ways not previously possible. For financial education, which I care a lot about, this is really exciting. The ability to provide the right context to the right person at the right moment, rather than generic content that may or may not land, can change how people interact with markets in a real and lasting way.

Risk management is moving from detection to prediction as well. That means identifying patterns that tend to precede bad outcomes, rather than simply flagging them after the fact. For anyone serious about customer protection, this is one of the most important things on the horizon.

The thing that keeps me up at night

The same capabilities that make AI really useful in the hands of a well-run platform also make it really dangerous in the hands of a platform that isn’t.

AI optimized for engagement rather than outcomes could learn, very efficiently, how to keep people trading, even when it is not in their best interests. It would surface content that motivates rather than informs. It would personalize in ways that exploit, rather than counter, the biases it identifies.

AI does not change motivation; it makes execution more precise. Whether AI accelerates the good version of what platforms can do, or the bad version, depends entirely on intent and governance. That’s it.

We discussed governance at length in the House of Lords this week.

The question is not whether AI can increase the amount of information available to people. Rather, it is a question of whether it improves the quality of the decisions they make with this information. These are really different problems.

The governance frameworks that are built now, in regulation, in business practice, and in how platforms are designed, will determine which of the two gets solved. The FCA’s Consumer Duty is a step in the right direction. Requiring firms to demonstrate good outcomes, rather than simply disclose risks, creates real accountability for how AI is used. But regulation only sets the floor. What happens above it is up to us.

The companies that earn trust in the long term will be those that treat governance as a design principle, not a compliance exercise, and embrace artificial intelligence that makes people better at making decisions, not just faster at making them.
